Clustering Guide (Old)

Threat Response clustering guide

The Proofpoint Threat Response platform can be deployed and configured as a high-availability (HA) or fail-over cluster. This lets incident responders configure the platform so that potential downtime is significantly reduced or possibly eliminated.

The high-availability configuration follows a master/standby mode, with each server functioning as a standalone system and all servers sharing a management IP address. This provides a seamless experience for the end users of the platform. If the server currently holding the master role becomes unreachable, the user is transferred to the standby server, which then assumes the master role. When the previous master server is brought back online, it assumes the standby role.

Configuration requirements

- Threat Response servers that are to be members of the cluster should reside on the same subnet, and those network addresses should be assigned to the `Eth0` interface

- The cluster management IP address should reside on the same subnet as the Threat Response servers

- There should be open network communication between the cluster members to ensure that the database is able to be replicated

- Credentials for individual servers should be configured and properly recorded

- All of the cluster members should be configured individually for the remote authentication source if account authentication is to be configured to “remote.” This prevents failed login attempts in the event that a member server without this configuration assumes the master server role.
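The subnet requirements above can be checked before any settings are entered. The following is a minimal sketch and not part of the product: the function name `check_cluster_addressing` and all addresses are hypothetical, and the code relies only on Python's standard `ipaddress` module. It derives the network from the management IP and its subnet mask length, then reports any member address that falls outside it.

```python
import ipaddress


def check_cluster_addressing(management_ip, mask_length, member_ips):
    """Return a list of problems; an empty list means every member is on the subnet.

    Hypothetical helper for illustration only; it does not contact any appliance.
    """
    problems = []
    # strict=False derives the network from a host address plus a mask length.
    network = ipaddress.ip_network(f"{management_ip}/{mask_length}", strict=False)

    for ip_text in member_ips:
        ip = ipaddress.ip_address(ip_text)
        if ip not in network:
            problems.append(f"{ip} is outside {network}")
    return problems


# Example values are made up (reserved documentation address ranges).
print(check_cluster_addressing("192.0.2.10", 24, ["192.0.2.11", "192.0.2.12"]))
print(check_cluster_addressing("192.0.2.10", 24, ["192.0.2.11", "198.51.100.5"]))
```

The first call reports no problems, while the second flags the member whose address lies on a different subnet. The check covers addressing only; it says nothing about whether replication traffic is actually permitted between the members.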

Initial cluster configuration

Access the Console Management interface for the initial cluster member using an admin-level account.

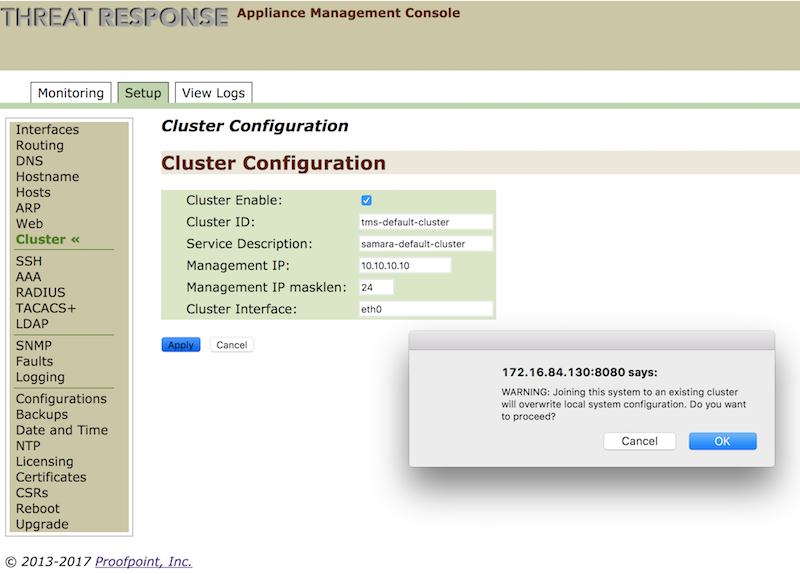

- Navigate to the `Setup` tab

- Select `Cluster` in the side menu

- Under `Cluster Configuration` enter the `Cluster ID`, `Service Description`, `Management IP`, `Management IP subnet mask length` and the cluster interface.

- Check the `Cluster Enable` checkbox

- Click the `Apply` button to apply the settings.

Warning pop up

A warning box will appear stating that joining the system to an existing cluster will overwrite the local system configuration. Click `Ok` to proceed.

- Click the `Save` button

Warning message

There may be a warning stating that the master is unknown. This can happen when initializing the cluster and should clear once the configuration is saved, as the server will then assume the master role.

- After clicking `Save`, the console manager should reflect the number of nodes in the cluster and the number of cluster nodes that are running.

Cluster configuration information

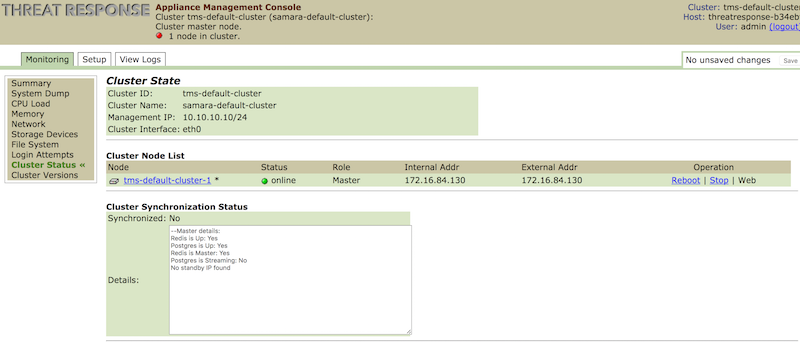

Once the initial cluster configuration is done, the Console Management GUI will reflect information about the configured cluster.

- Select the `Monitoring` tab.

- Check the cluster state information using the `Cluster Status` section.

The `Cluster Status` section provides the following information:

- `Status` – online or offline

- `Role` – The cluster role that the server currently holds: Master/Standby/Normal

  - Master – The server that is currently holding the master role and is interacting with end users.

  - Standby – The server that is currently holding the standby role and will assume the master role should the master server become unresponsive.

  - Normal – A server that is a cluster member but does not currently hold the master or standby roles. When the standby server assumes the master role, one of the servers with this designation will assume the standby role.

- `Internal Addr` – This is the internal IP address assigned to the network interface that was designated in the cluster settings.

- `External Addr` – This is the external IP address that is assigned to an interface that is not designated in the cluster settings. This setting will only differ from the internal address if the server has multiple network interfaces.

- `Operation` – This provides interaction with the individual systems, specifically the Reboot/Stop/Web options

  - Reboot – Selecting this option will reboot that cluster node. It’s important to realize that rebooting a system with the master/standby role will cause that role to be transferred to another server if available.

  - Stop – Selecting this option will power down the system. This will require the system to be restarted via the ESX interface. In addition, as with rebooting, stopping a system with the master/standby role will cause that role to be transferred to another server if available.
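The role hand-off described in the `Role` and `Operation` entries above can be illustrated with a small toy model. This is a sketch under stated assumptions, not product code: the `ClusterModel` class, the `Offline` marker and the node names are hypothetical. The guide does not say which Normal server is promoted, so the model simply picks the first one listed. It also assumes that a returning server becomes Normal when the standby role is already held.

```python
MASTER, STANDBY, NORMAL, OFFLINE = "Master", "Standby", "Normal", "Offline"


class ClusterModel:
    """Toy model of cluster role hand-off (illustration only)."""

    def __init__(self, names):
        # Assumption: the first listed node starts as master, the second as standby.
        self.roles = {name: NORMAL for name in names}
        for name, role in zip(names, (MASTER, STANDBY)):
            self.roles[name] = role

    def _holder(self, role):
        # Name of the first node currently holding `role`, or None.
        return next((n for n, r in self.roles.items() if r == role), None)

    def _fill_vacancies(self):
        for role in (MASTER, STANDBY):
            if self._holder(role) is not None:
                continue
            # A vacant master role goes to the standby if there is one.
            candidate = self._holder(STANDBY) if role == MASTER else None
            if candidate is None:
                # Assumption: otherwise the first Normal node is promoted.
                candidate = self._holder(NORMAL)
            if candidate is not None:
                self.roles[candidate] = role

    def take_offline(self, name):
        """Model a Reboot/Stop operation or an unreachable node."""
        self.roles[name] = OFFLINE
        self._fill_vacancies()

    def bring_online(self, name):
        """A returning node rejoins without reclaiming the master role."""
        self.roles[name] = NORMAL
        self._fill_vacancies()


cluster = ClusterModel(["ptr-a", "ptr-b", "ptr-c"])
cluster.take_offline("ptr-a")   # ptr-b takes over as master, ptr-c becomes standby
print(cluster.roles)
cluster.bring_online("ptr-a")   # ptr-a rejoins as Normal in this model
print(cluster.roles)
```

In a two-node cluster the same model returns the previous master to the standby role, because that role is vacant when it rejoins.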

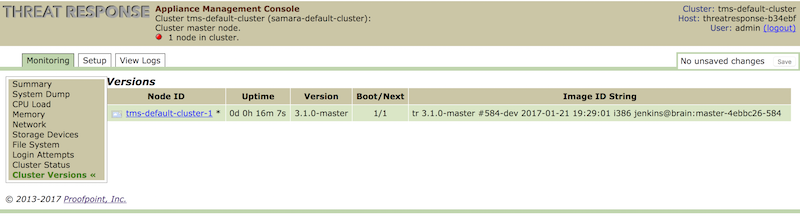

The Cluster versions section provides information about the cluster run-time metrics (uptime, version, etc.).

There will also be additional information in the page header displaying the Cluster ID, Service Description, the Cluster role that the specific server has, the number of nodes currently in the cluster, the host name and the logged in account.

Additional cluster member configuration

To add a Threat Response appliance to the existing cluster, admin users need to perform the following steps:

- Navigate to the `Setup` tab of the Appliance Management Console

- Select `Cluster` in the side menu

- Under `Cluster Configuration` enter the `Cluster ID`, the `Service Description`, `Management IP`, the `Management IP subnet mask length` and the cluster interface.

- Check the `Cluster Enable` checkbox

- Click the `Apply` button to apply the settings.

- A warning box will appear stating that joining the system to an existing cluster will overwrite the local system configuration. Click `Ok` to proceed

- Click the `Save` button

After the configuration has been saved and the server has become a cluster member, a new message will appear in the page header stating that the system will redirect the user to the cluster master. This will happen automatically unless the ‘local’ hyperlink is selected. Selecting that hyperlink is also how the individual local server settings are accessed in the future.

Importing standby token

Once you add the additional cluster member, the final step to complete the configuration is to export the security token from the standby cluster appliance and import it into the active cluster appliance.

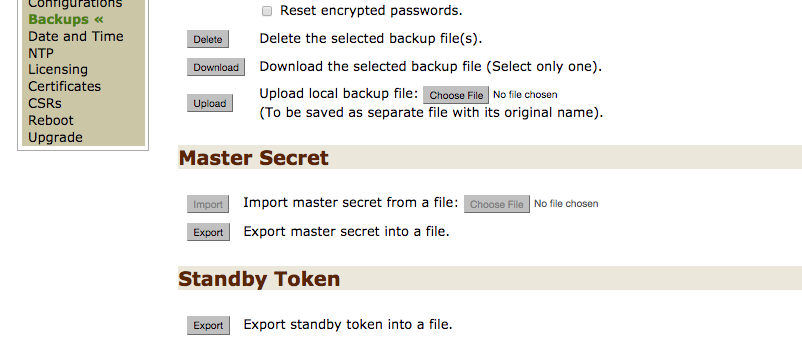

To export the security token from the standby appliance, perform the following steps:

- Log in to the Threat Response Management console for the standby appliance

- Navigate to the `Setup` > `Backups` menu section

- In the `Standby token` page section, click the `Export` button to export the standby token into a file

- Save the file on the hard disk.

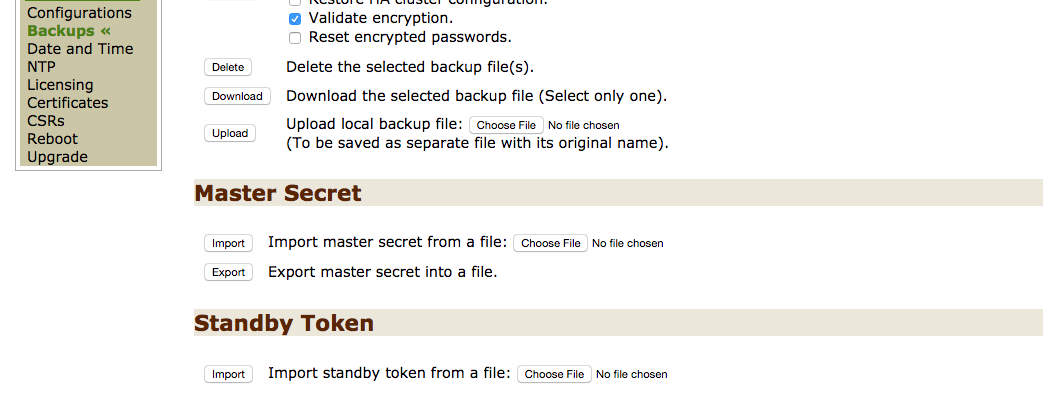

To import the security token into the active appliance, perform the following steps:

- Log in to the Threat Response Management console for the active appliance

- Navigate to the `Setup` > `Backups` menu section

- In the `Standby token` page section, click the `Import` button to import the standby token from a file

- Choose the file that you exported in the previous step

- Save the configuration

After all these steps are performed, the cluster configuration is complete and the status will turn green.

Note

To upgrade a clustered system running an older version of PTR/TRAP (4.6 and below), refer to the Upgrade Guide.